import torch
import torch.nn as nn
import torch.optim as optim
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.metrics import confusion_matrix, accuracy_score
from sklearn.decomposition import PCA
import numpy as np
import matplotlib.pyplot as plt
import seaborn as sns

"""

torch:PyTorch深度学习框架,用于构建和训练神经网络。

torch.nn:包含了构建神经网络所需的各种层和损失函数。

torch.optim:提供了各种优化算法,如Adam、SGD等。

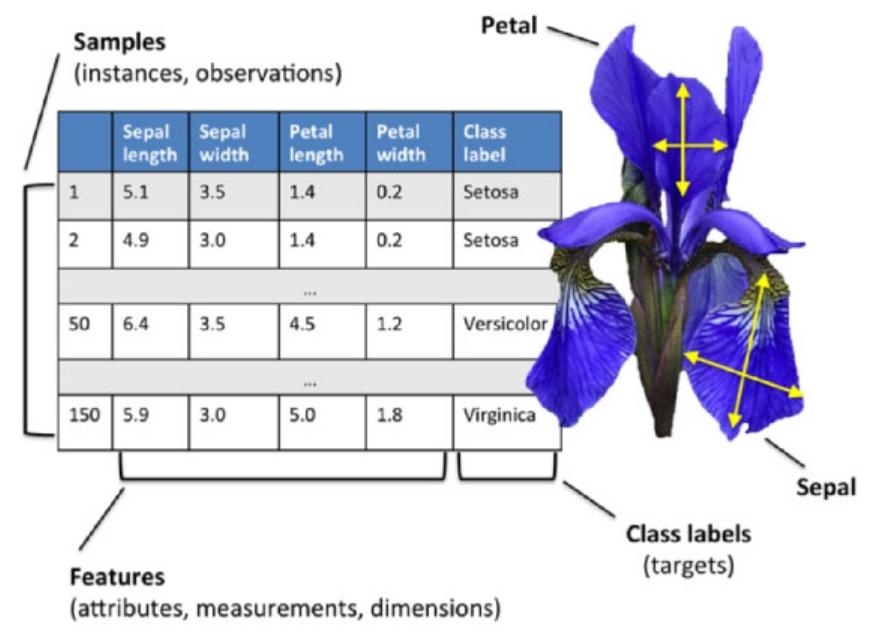

sklearn.datasets.load_iris:用于加载鸢尾花数据集。

sklearn.model_selection.train_test_split:用于将数据集划分为训练集和测试集。

sklearn.preprocessing.StandardScaler:用于对数据进行标准化处理。

sklearn.metrics.confusion_matrix和sklearn.metrics.accuracy_score:用于评估模型的性能。

sklearn.decomposition.PCA:用于对数据进行主成分分析(PCA)降维。

numpy:用于数值计算。

matplotlib.pyplot:用于绘制图表。

seaborn:基于matplotlib的可视化库,提供更美观的图表样式。

"""

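# Load the Iris dataset: 150 samples, 4 features, 3 classes.
iris = load_iris()
X = iris.data
y = iris.target

# Hold out 20% of the samples for testing. stratify=y keeps the class
# proportions the same in both splits.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)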

scaler = StandardScaler()

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)
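"""
Note on the scaling step above: the scaler is fitted on the training split only,
and the same statistics are then reused for the test split, so no information
from the test data leaks into preprocessing. The sketch below illustrates that
idea in plain Python for a single feature. The helper names fit_stats and
apply_stats and the toy values are hypothetical; they are not part of
scikit-learn and are not used by the rest of this script.

```python
import math


def fit_stats(values):
    # Mean and population standard deviation (divide by n, not n - 1),
    # which is the convention StandardScaler uses.
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / n
    return mean, math.sqrt(variance)


def apply_stats(values, mean, std):
    # Reuse statistics learned elsewhere. A zero std is treated as 1 here
    # so that a constant feature does not cause a division by zero.
    scale = std if std > 0 else 1.0
    return [(v - mean) / scale for v in values]


train_feature = [1.0, 2.0, 3.0, 4.0]  # toy values, not Iris data
test_feature = [2.5, 5.0]

# Statistics come from the training values only.
mean, std = fit_stats(train_feature)
train_scaled = apply_stats(train_feature, mean, std)
test_scaled = apply_stats(test_feature, mean, std)
```

The scaled test values are generally not zero-mean, which is expected: they are
expressed relative to the training distribution.
"""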

# Convert to tensors: float32 features and int64 class indices.
X_train_tensor = torch.FloatTensor(X_train_scaled)
y_train_tensor = torch.LongTensor(y_train)
X_test_tensor = torch.FloatTensor(X_test_scaled)
y_test_tensor = torch.LongTensor(y_test)

class IrisClassifier(nn.Module):

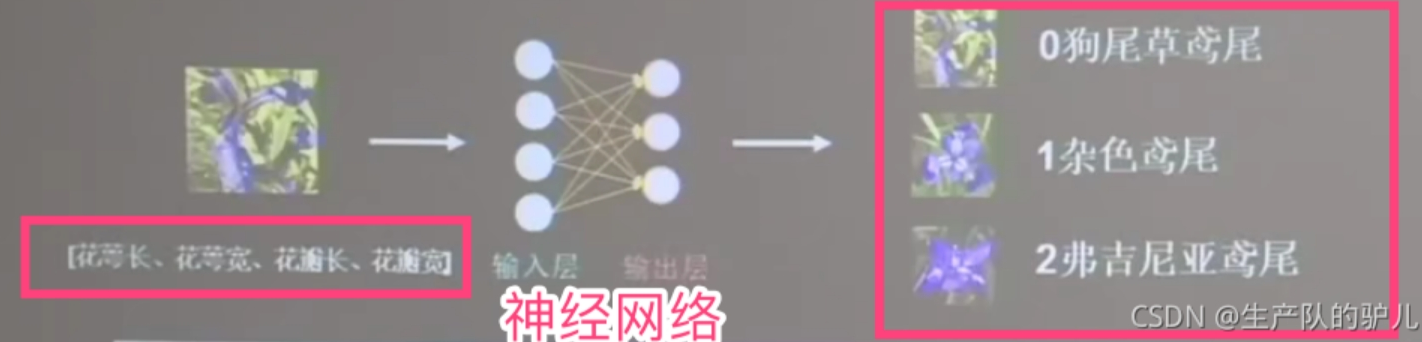

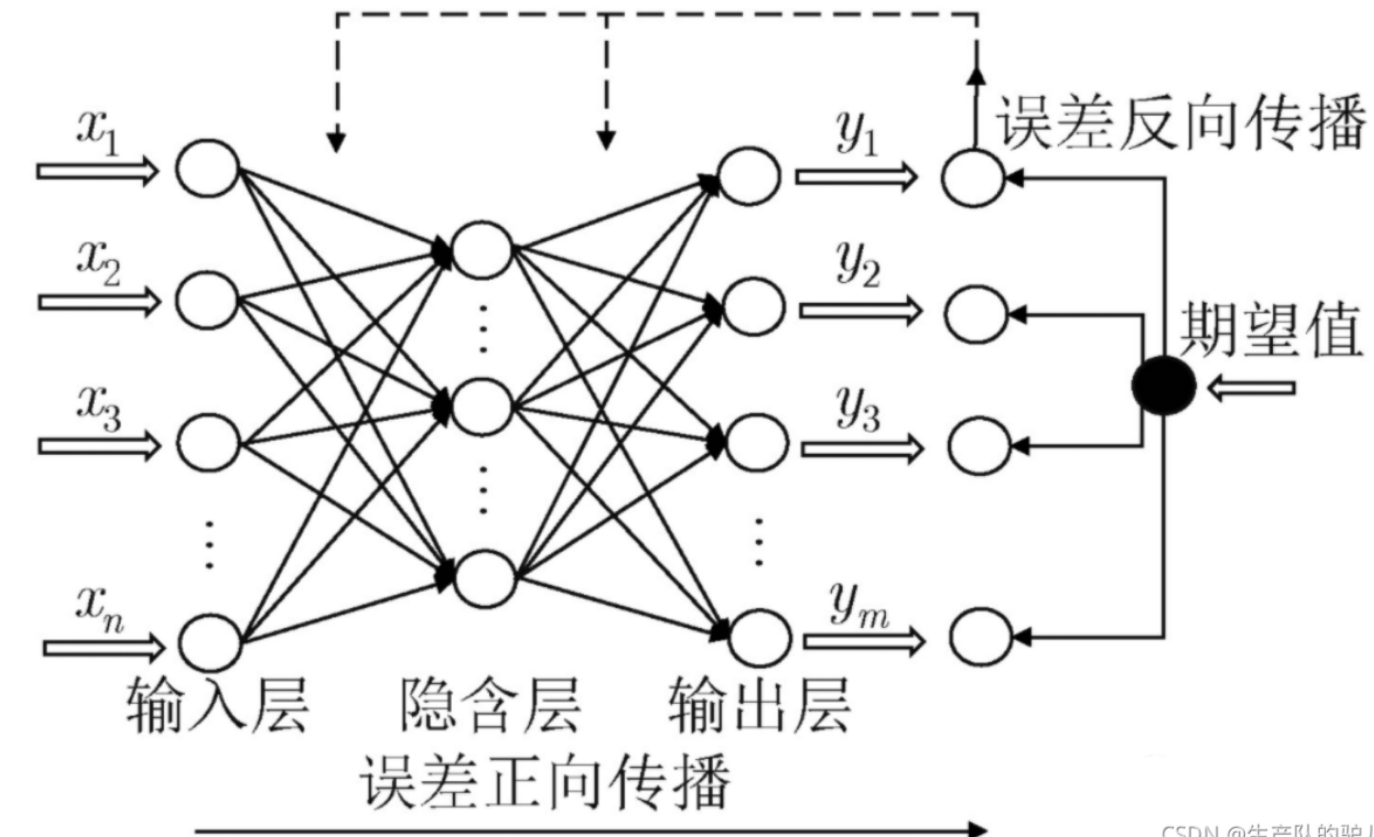

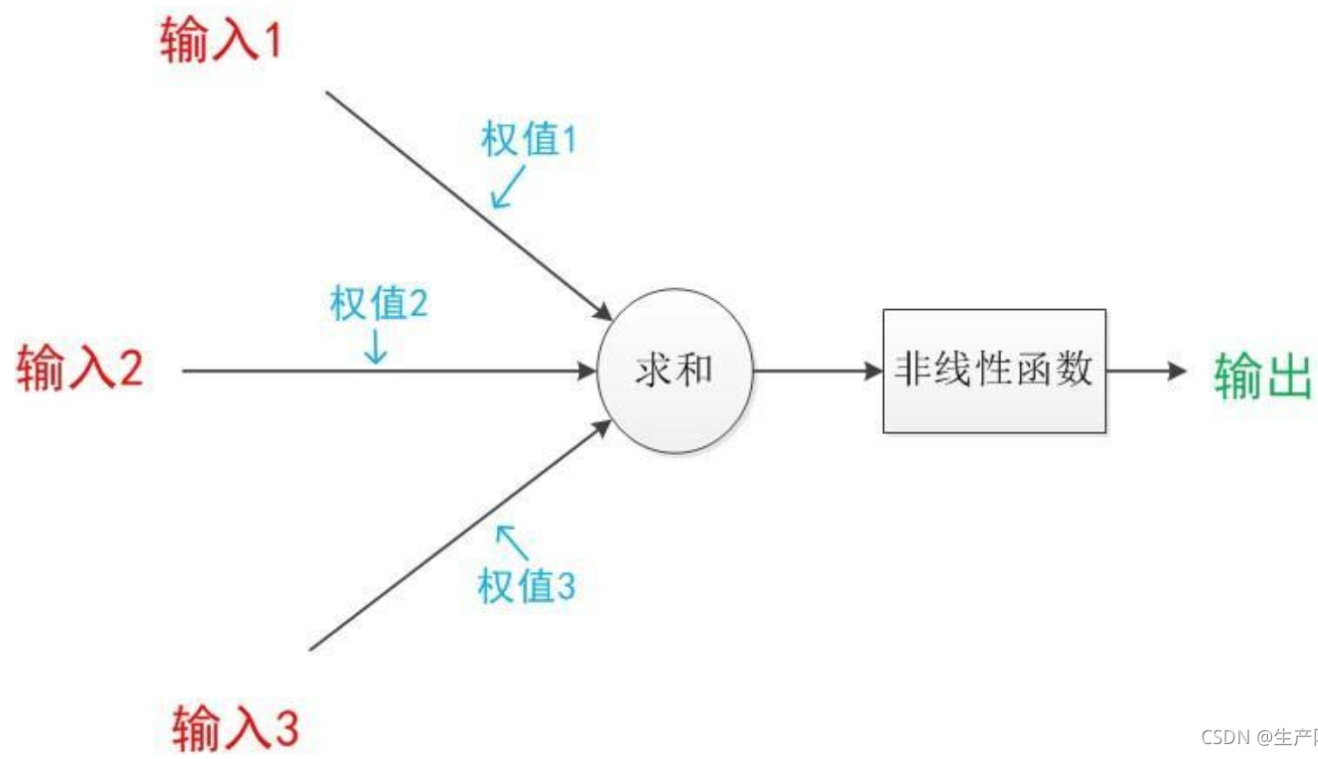

"""

定义一个名为 IrisClassifier 的神经网络模型,继承自 nn.Module。

该模型包含三个全连接层和一个 ReLU 激活函数。forward 方法定义了模型的前向传播过程,

输入数据依次通过三个全连接层和 ReLU 激活函数,最终输出预测结果。

"""

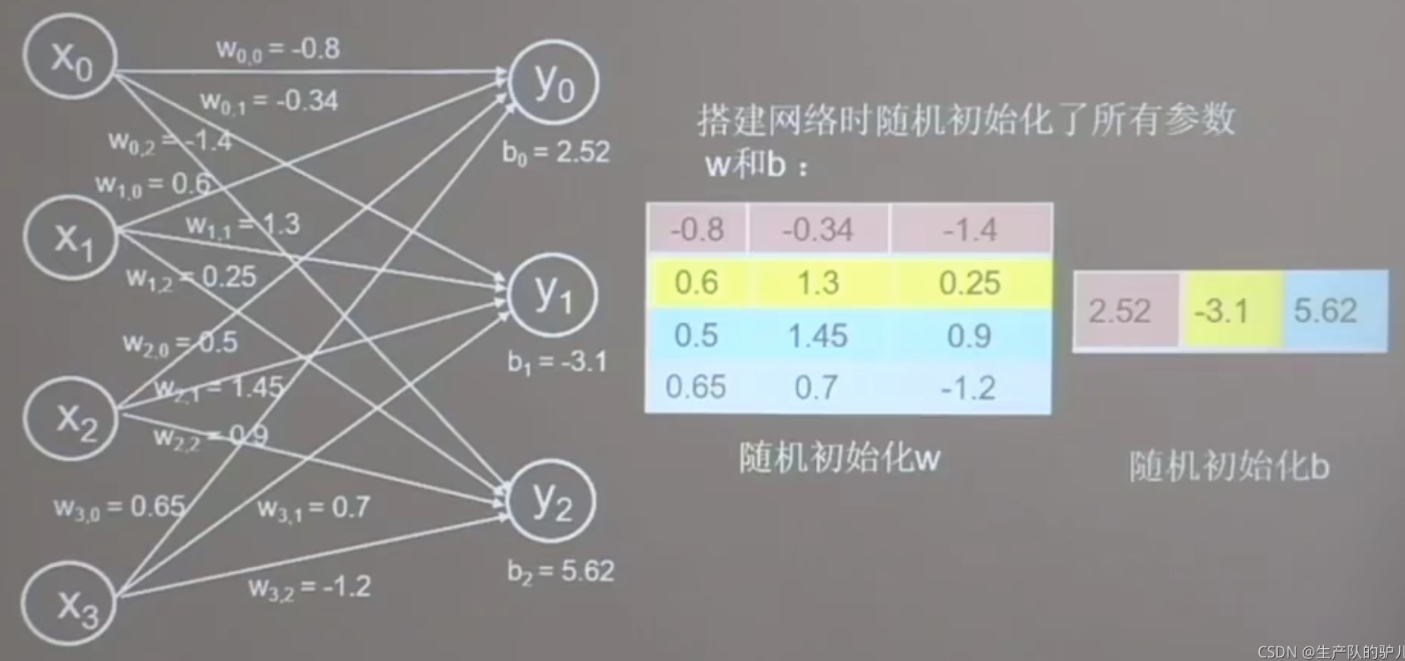

def __init__(self):

super().__init__()

self.layer1 = nn.Linear(4, 10)

self.layer2 = nn.Linear(10, 10)

self.output = nn.Linear(10, 3)

self.relu = nn.ReLU()

def forward(self, x):

x = self.relu(self.layer1(x))

x = self.relu(self.layer2(x))

x = self.output(x)

return x

model = IrisClassifier()

criterion = nn.CrossEntropyLoss()

optimizer = optim.Adam(model.parameters(), lr=0.01)
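"""
Note on the loss chosen above: nn.CrossEntropyLoss takes raw logits and integer
class indices, which is why the model's forward pass applies no softmax. The
sketch below shows the quantity being computed (log-softmax followed by the
negative log-likelihood, averaged over the batch) in plain Python. The function
names and the toy logits are hypothetical, for illustration only; this is not
how PyTorch implements the loss internally.

```python
import math


def cross_entropy(logits, target):
    # Numerically stable log-sum-exp: subtract the largest logit first.
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    # Negative log-probability that softmax assigns to the true class.
    return log_sum_exp - logits[target]


def mean_cross_entropy(batch_logits, targets):
    # Average over the batch, matching the default "mean" reduction.
    losses = [cross_entropy(row, t) for row, t in zip(batch_logits, targets)]
    return sum(losses) / len(losses)


# Toy logits for three classes (illustrative values only).
batch_logits = [[2.0, 0.5, -1.0], [0.0, 0.0, 0.0]]
targets = [0, 2]
loss = mean_cross_entropy(batch_logits, targets)
```

With all-equal logits the per-sample loss is log(3), the value for a uniform
guess over three classes, which is roughly where an untrained model starts.
"""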

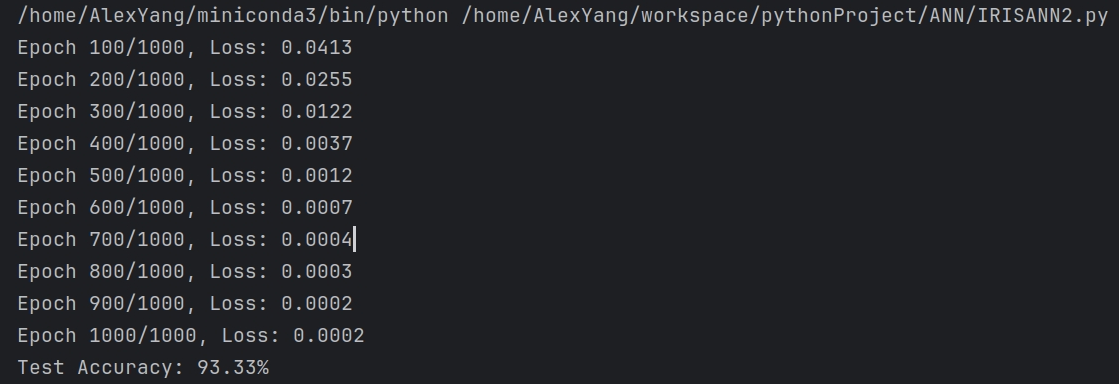

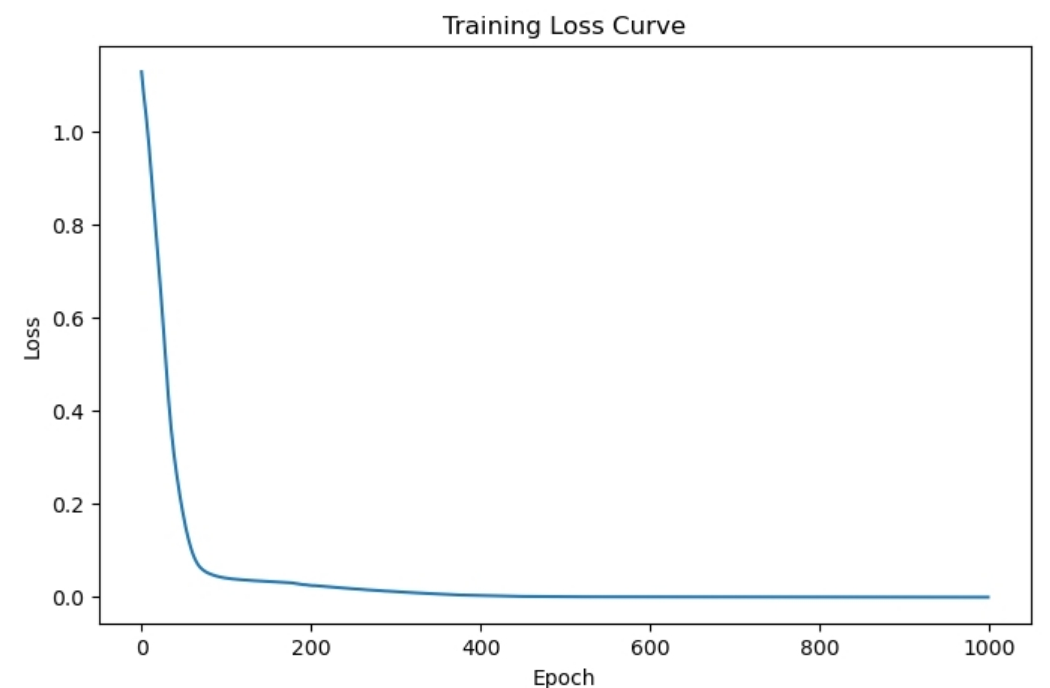

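# Full-batch training: each epoch runs one update on the whole training set.
epochs = 1000
losses = []

for epoch in range(epochs):
    optimizer.zero_grad()                      # clear gradients from the previous step
    outputs = model(X_train_tensor)            # forward pass, returns logits
    loss = criterion(outputs, y_train_tensor)  # cross-entropy on the training set
    loss.backward()                            # backpropagate
    optimizer.step()                           # update the weights

    losses.append(loss.item())

    if (epoch + 1) % 100 == 0:
        print(f"Epoch {epoch+1}/{epochs}, Loss: {loss.item():.4f}")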

plt.figure(figsize=(8, 5))

plt.plot(losses)

plt.xlabel("Epoch")

plt.ylabel("Loss")

plt.title("Training Loss Curve")

plt.show()

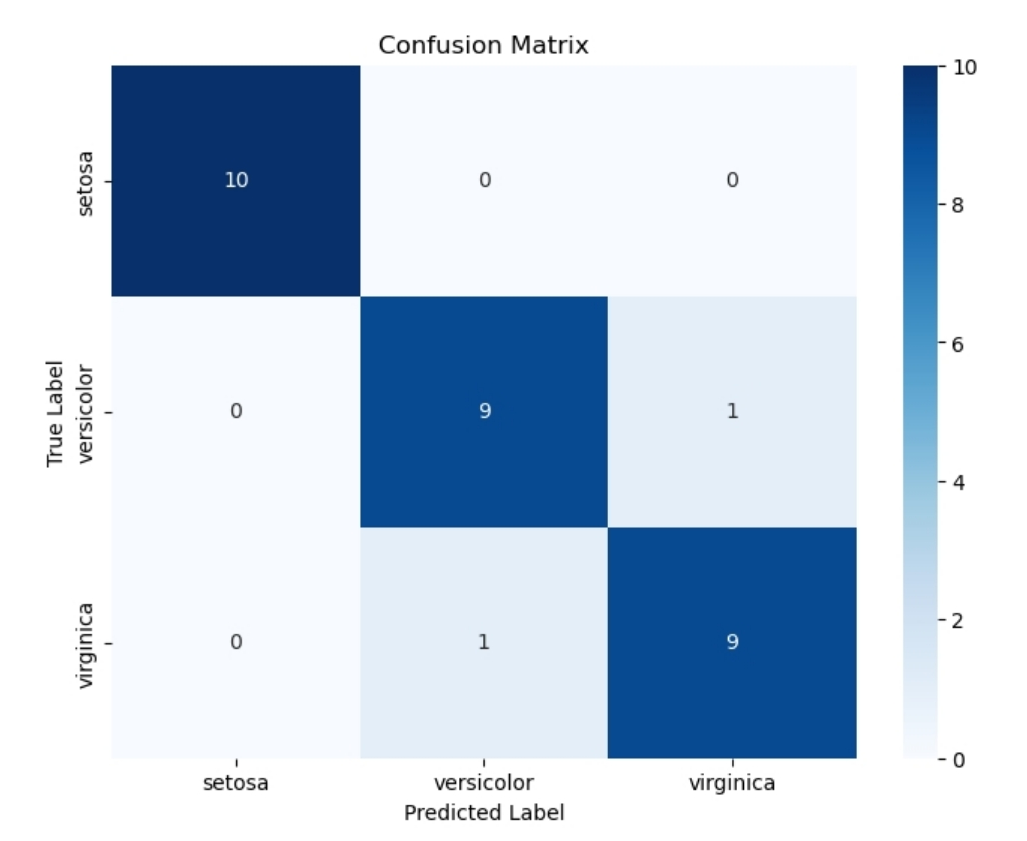

with torch.no_grad():
    y_pred = model(X_test_tensor)
    predicted_labels = torch.argmax(y_pred, dim=1)

accuracy = accuracy_score(y_test, predicted_labels)

print(f"Test Accuracy: {accuracy:.2%}")

cm = confusion_matrix(y_test, predicted_labels)

plt.figure(figsize=(8, 6))
sns.heatmap(
    cm,
    annot=True,
    fmt="d",
    cmap="Blues",
    xticklabels=iris.target_names,
    yticklabels=iris.target_names,
)

plt.xlabel("Predicted Label")

plt.ylabel("True Label")

plt.title("Confusion Matrix")

plt.show()
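"""
Note on reading the heatmap above: in the matrix returned by confusion_matrix,
rows are true labels and columns are predicted labels, so the diagonal holds
the correct predictions. The sketch below rebuilds that layout in plain Python
and derives accuracy from it. The function names and the toy labels are
hypothetical, for illustration only; they are not the model's actual output.

```python
def confusion_counts(true_labels, predicted_labels, n_classes):
    # matrix[i][j] counts samples whose true class is i and predicted class is j.
    matrix = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(true_labels, predicted_labels):
        matrix[t][p] += 1
    return matrix


def accuracy_from_matrix(matrix):
    # Correct predictions sit on the diagonal.
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total


# Toy labels: one class-1 sample is mistaken for class 2.
true_labels = [0, 0, 1, 1, 2, 2]
predicted = [0, 0, 1, 2, 2, 2]
matrix = confusion_counts(true_labels, predicted, n_classes=3)
acc = accuracy_from_matrix(matrix)
```

An off-diagonal entry such as matrix[1][2] shows which pair of classes is being
confused, which a single accuracy figure cannot tell you.
"""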

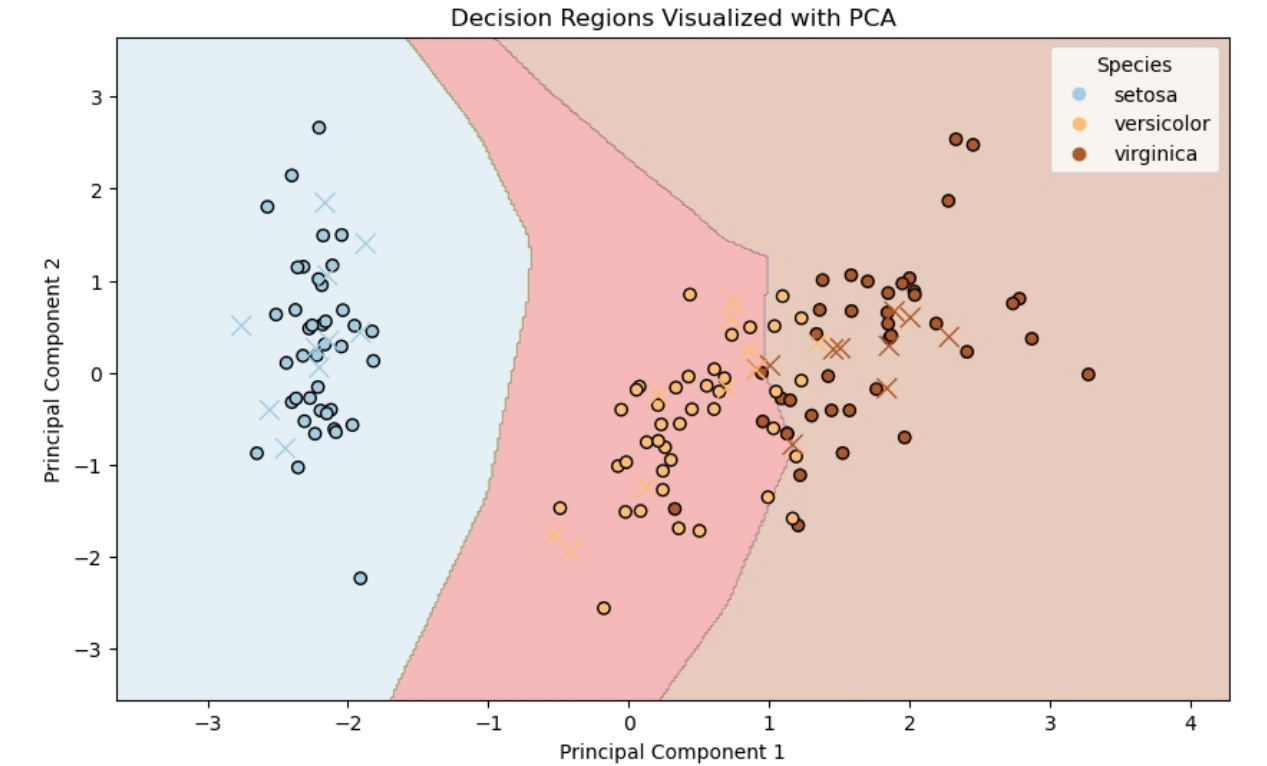

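# Project the standardized features onto two principal components for plotting.
pca = PCA(n_components=2)
X_train_pca = pca.fit_transform(X_train_scaled)
X_test_pca = pca.transform(X_test_scaled)

# Build a grid over the 2D PCA plane with step size h.
h = 0.02
x_min, x_max = X_train_pca[:, 0].min() - 1, X_train_pca[:, 0].max() + 1
y_min, y_max = X_train_pca[:, 1].min() - 1, X_train_pca[:, 1].max() + 1
xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))

# Map each grid point back to the 4D standardized feature space so the trained
# model can classify it. The plot therefore shows the model's decisions on the
# plane spanned by the two components, not its full 4D decision boundary.
mesh_points = np.c_[xx.ravel(), yy.ravel()]
X_inverse = pca.inverse_transform(mesh_points)
X_inverse_tensor = torch.FloatTensor(X_inverse)

with torch.no_grad():
    outputs = model(X_inverse_tensor)
    Z = torch.argmax(outputs, dim=1).numpy()

Z = Z.reshape(xx.shape)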

plt.figure(figsize=(10, 6))
plt.contourf(xx, yy, Z, alpha=0.3, cmap="Paired")

scatter = plt.scatter(
    X_train_pca[:, 0],
    X_train_pca[:, 1],
    c=y_train,
    cmap="Paired",
    edgecolors="k",
    label="Train",
)
plt.scatter(
    X_test_pca[:, 0],
    X_test_pca[:, 1],
    c=y_test,
    cmap="Paired",
    marker="x",
    s=100,
    linewidth=1,
    label="Test",
)

plt.xlabel("Principal Component 1")
plt.ylabel("Principal Component 2")
plt.legend(
    handles=scatter.legend_elements()[0],
    labels=iris.target_names.tolist(),
    title="Species",
)
plt.title("Decision Regions Visualized with PCA")
plt.show()
